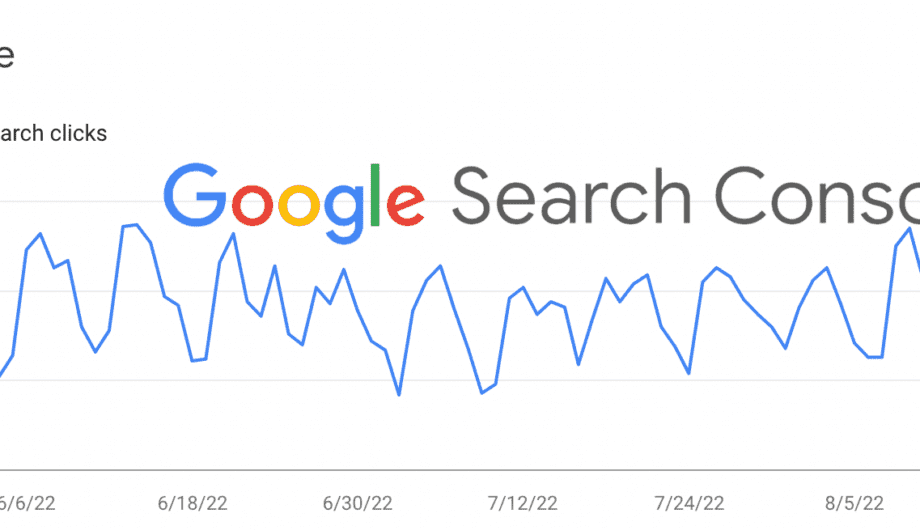

Welcome back to part three in my series about Google Search Console. Feel free to go back and review part 1 and part 2 in the series. Both of the previous posts focused on search results and performance, which to be honest is probably mostly what you’ll use it for. But there are a lot of other tools you might find useful too, so today we’re going to move on and learn more about those.

Index

The next section I’m going to talk about, and probably ALL I’ll cover in today’s post, is the Index section. Indexing is about which of your pages are in the Google search results, which aren’t, and why.

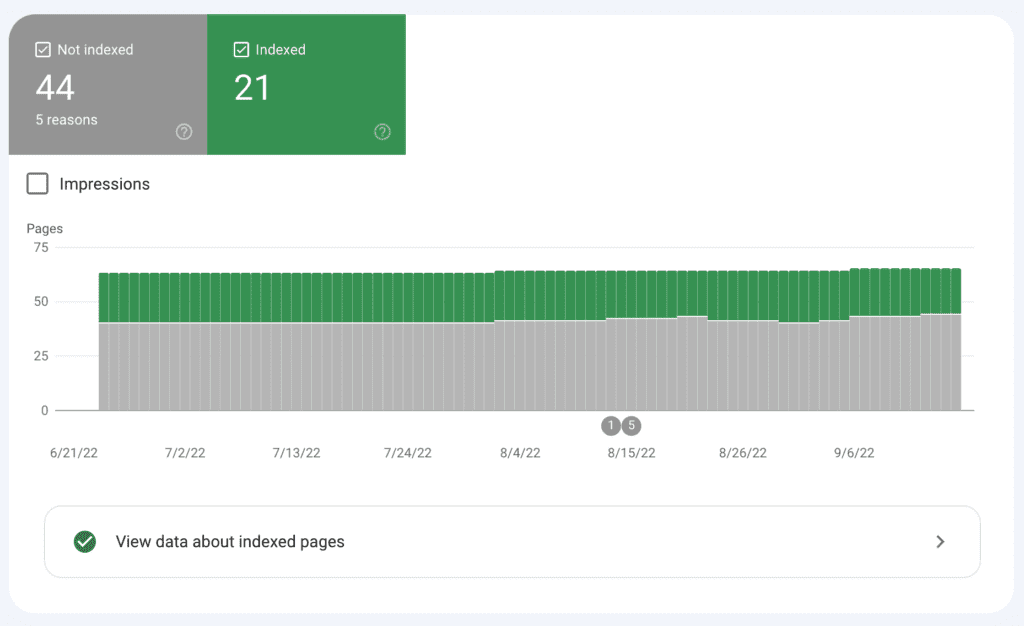

The first option you see is Pages. When you click on that, it will show you all the pages on your site that Google is aware of, along with how many are indexed and how many are not. So let’s look at an example.

When you look at this example, you can see that there are 44 pages not indexed, and 21 pages indexed. And if this is your site, you might get worried. Why are all my pages not being indexed?

If you’ve submitted a sitemap to Google (which we’ll get to in this post), then Google knows exactly how many pages are in your site. However, there are a number of reasons why some of them may not be indexed. And when I say ‘page’ here, that could mean a page, a post, an event, or any custom post type. So understand that a ‘page’ may not literally be a page, but it’s counted as one.

Why might some of your pages not be indexed?

There are a lot of reasons why some pages may not be indexed. The most obvious is that maybe you don’t want them indexed and you’ve set certain pages in your site to not be indexed. There are lots of reasons you might do this. Maybe you have specific landing pages that are for specific purposes, but aren’t for the general public. Like a page someone sees after they submit your form, or a special page they get to after signing up for your newsletter. Or special landing pages for your ad campaigns.

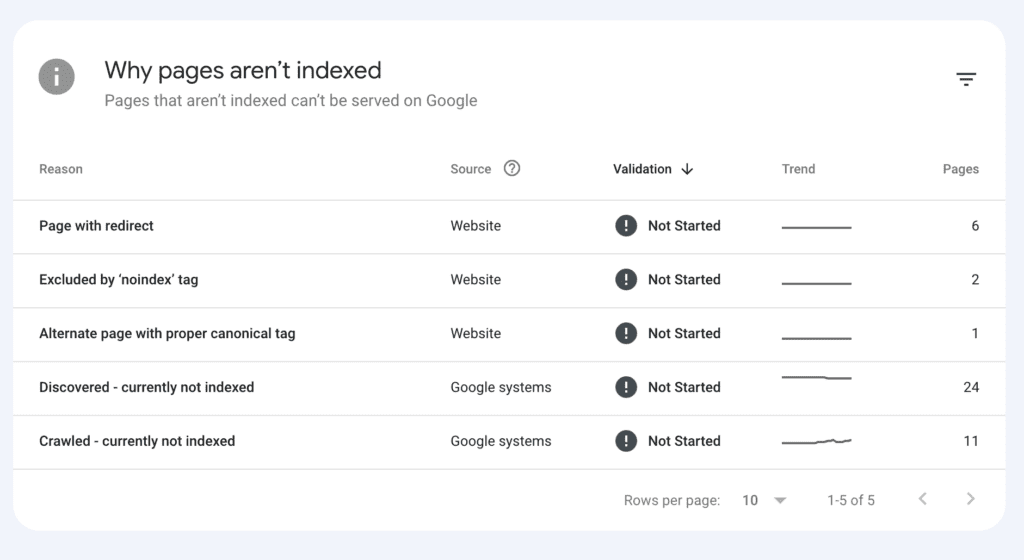

So let’s dig deeper, because Google Search Console will tell you exactly what pages aren’t being indexed and why. Here’s what it’s telling me about this particular site.

So we have five reasons why pages aren’t being indexed.

- Page with redirect: This means that there is a page in the site that is being redirected to another page. This happens a lot if you have content that gets outdated or you’ve deleted an old page or post, and you’ve created a new or updated page or post. Always redirect the old content to the new content. It helps people find the right information, and prevents error pages.

- Excluded by ‘noindex’ tag: This means that your site is telling Google not to index that page. It’s always a good idea to check whether the pages it shows you are ones that you actually want kept out of the index. One time a client had accidentally marked their entire site as noindex. Whoops! In this case, there are only two and when I look, those are pages we intentionally want to keep out of the search results.

- Alternate page with proper canonical tag: A canonical link is a way to prevent duplicate content by telling Google which is the preferred content. Why might this happen? There are a lot of ways. One of the more common reasons would be if your page and a page on another site are sharing the same content. Maybe you are both sharing content about a shared event, for example. Using a canonical link tells Google which page to index for that content. It helps avoid duplicate content and is less confusing to the search engine spiders.

- Discovered – currently not indexed: This means that Google is aware of these pages, but they haven’t been crawled yet, so they aren’t indexed. Oftentimes you’ll see this with new sites when you submit a sitemap. Google knows the pages are there, but it hasn’t crawled or indexed them.

- Crawled – currently not indexed: This means Google knows about the pages, has crawled the pages, but has decided not to index them. There are many reasons why that might happen: duplicate or thin content, dynamic URLs that aren’t useful, or private content (meaning the site is public, but the content is restricted). If you have a membership site, this would be common.
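Two of the reasons above, the noindex tag and the canonical link, live in a page’s HTML, so you can spot-check them yourself. Here is a minimal Python sketch, using only the standard library, that reads an HTML string and reports whether it carries a robots noindex meta tag and which canonical URL it declares. The sample HTML, the example.com URL, and the function names are made up for illustration, and the sketch only looks at the markup, not at anything a server might send in its response headers.

```python
from html.parser import HTMLParser


class IndexHintParser(HTMLParser):
    """Collects the robots meta directive and the canonical link from a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            # The content attribute can hold several directives, e.g. "noindex, follow".
            directives = (attrs.get("content") or "").lower().split(",")
            if "noindex" in [d.strip() for d in directives]:
                self.noindex = True
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")


def index_hints(html):
    """Return the noindex flag and canonical URL found in an HTML string."""
    parser = IndexHintParser()
    parser.feed(html)
    return {"noindex": parser.noindex, "canonical": parser.canonical}


# Hypothetical thank-you page, the kind you might deliberately keep out of search.
SAMPLE_HTML = """
<html><head>
  <meta name="robots" content="noindex, follow">
  <link rel="canonical" href="https://example.com/thank-you/">
</head><body>Thanks for signing up!</body></html>
"""

print(index_hints(SAMPLE_HTML))
```

If a page you want in the search results comes back with noindex set, that is the first thing to fix in your SEO plugin or theme settings.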

So what do you do?

The neat part of this is that you can click on each subsection and see exactly which pages are being classified into which area, and you can double check to see if that’s accurate. For example, when I click on the Excluded by ‘noindex’ tag section on this particular site, I see two pages that I set intentionally not to be indexed. So in that case, I do nothing. The place I would start is with the Crawled – currently not indexed section, because I would want to know why certain pages were crawled but still aren’t being indexed.

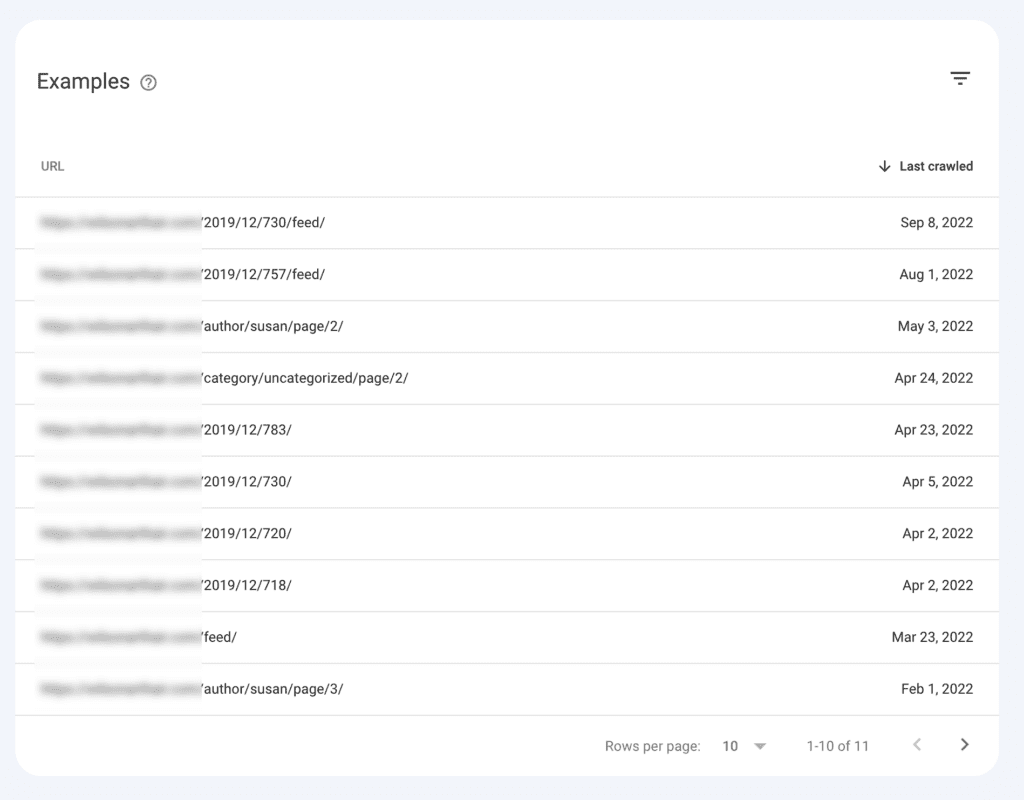

For this site, when I click through, I can see all the pages that fall into that category.

I have blurred the URLs to keep this account anonymous, but when I look at the results, what I see are archive pages of blog posts, mostly by date but a few by category or author. And this makes complete sense for this site. The date archives don’t have a lot of useful data, they aren’t optimized, etc. They are just archives of posts from those dates. On this site, these are the default archive pages. They aren’t linked from anywhere in the site, which is probably one reason they aren’t being indexed. (Internal links matter, folks.)

So in this situation, this is not at all concerning to me. I can choose to either optimize and design those pages, or leave them unindexed.

More often than not, this will be the reason pages are not indexed. But if you see pages that you do want indexed showing up in this list, then you should look at those pages and try to figure out why.

The other one I would really want to look into is the Discovered – currently not indexed section. Usually this happens on new sites. You’ve submitted that sitemap, Google knows about the pages, but they haven’t been crawled yet and they haven’t been indexed. That’s pretty normal and should resolve itself in time, especially if you have good content and your internal linking structure is good.

However, sometimes you may need to work to get some pages indexed. I had a client who had several very long, very high quality blog posts that weren’t getting crawled, which was a problem. It took several indexing requests to Google to finally get those URLs off the discovered list and into the indexed list.

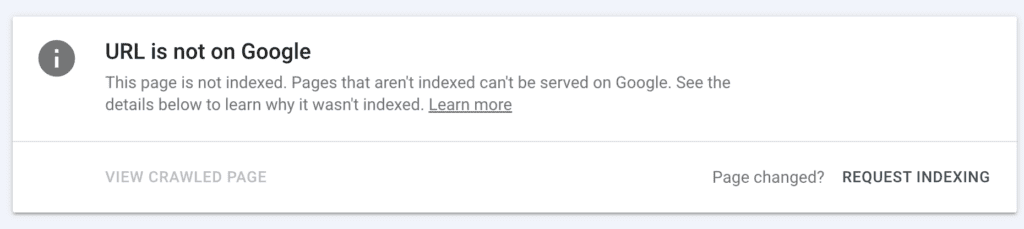

If a page you know is good, has good content, is indexable, and is linked in your site is getting flagged in this area, you can use the URL Inspection tool to request that page be indexed.

In Google Search Console, you can go to the URL Inspection tool, paste in the URL that isn’t being crawled, and then click on that Request Indexing link to try to push Google to index that page. This can give Google a little nudge to get it done faster than they maybe otherwise would have.

Other reasons why you may have a page not being indexed could be issues with your mobile friendliness, site speed, or SSL certificate. So check on all those things and make sure you don’t have any issues.

Knowing if your pages are indexed or not, and how to tell which ones and why, is a huge step toward making sure that your site is being found. Google Search Console is the best resource for this information.

Sitemaps

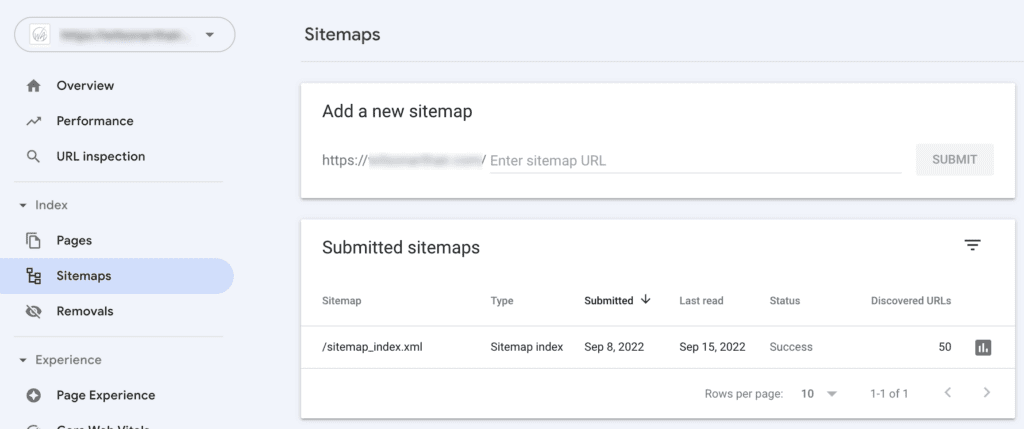

Finally, I want to talk about the sitemap tool, because this one is super important. The very first thing you should do after you launch your site and set up Google Analytics is submit your sitemap to Google. This is Google’s roadmap to all your site’s pages, and if you want to get found, then you have to tell Google where those pages are.

When you go to Sitemaps in Google Search Console, you have the option to add a new sitemap and an option to see already submitted sitemaps. Keep in mind that this is an XML sitemap, not a fancy one that your users will see. This is a sitemap designed for search robots to be able to use. If you use Yoast SEO, you’ll get an automatic sitemap as soon as you activate the plugin which is super useful. If you don’t use Yoast, then you may have to install a separate Sitemap plugin in your site.
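If you’re curious what an XML sitemap actually contains, it’s just a list of URLs wrapped in a few standard tags. Your plugin builds this for you, so you don’t need to write one by hand, but here is a rough Python sketch of a bare-bones version. The `build_sitemap` function and the example.com URLs are hypothetical, and a real plugin-generated sitemap will usually include extra details such as last-modified dates.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages, for illustration only.
sitemap_xml = build_sitemap([
    "https://example.com/",
    "https://example.com/about/",
])
print(sitemap_xml)
```

Each `url` entry holds one page address in its `loc` tag, and that list is what the search robots read.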

For this site, there’s one that was already submitted successfully with 50 URLs discovered. If one hadn’t been submitted, I would add the sitemap URL in the first box, and then hit submit.

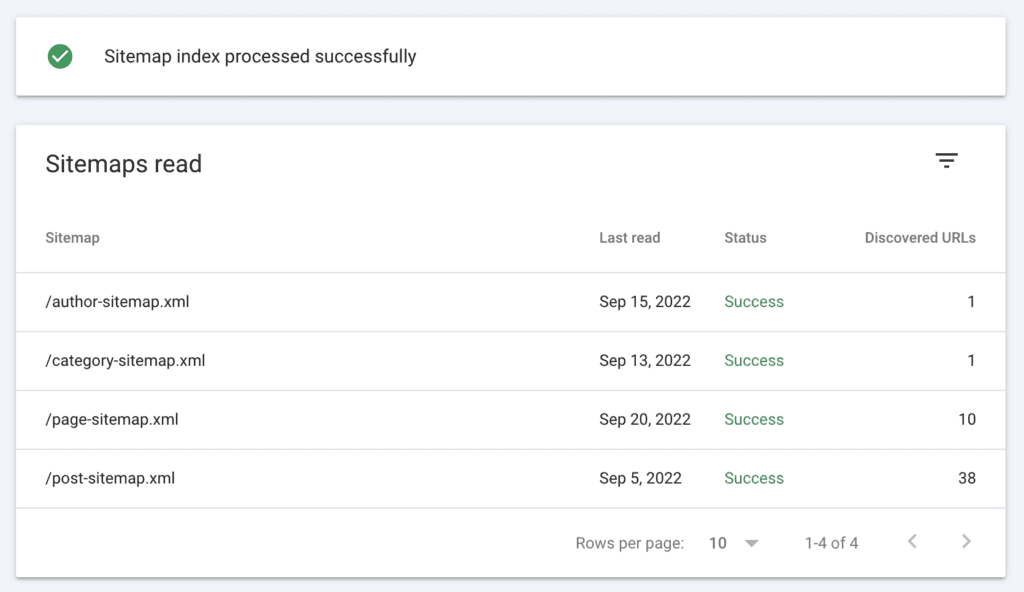

You can click through on the sitemap that’s already been submitted to see more details about it.

This sitemap is made up of four smaller sitemaps: the author sitemap, which is a list of posts by author; the category sitemap, which is a list of categories in the site; the page sitemap, which is a list of pages in the site; and the post sitemap, which is a list of posts in the site.
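A sitemap that points to other sitemaps like this is called a sitemap index. As a rough illustration, here is a small Python sketch that reads a sitemap index and lists the child sitemaps inside it. The sample XML, the file names, and the `child_sitemaps` function are all made up for this example, so the names your own plugin generates may differ.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Made-up sitemap index with one child sitemap per content type.
SAMPLE_INDEX = """<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://example.com/post-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://example.com/page-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://example.com/category-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://example.com/author-sitemap.xml</loc></sitemap>
</sitemapindex>"""


def child_sitemaps(index_xml):
    """Return the URL of every child sitemap listed in a sitemap index."""
    root = ET.fromstring(index_xml)
    return [loc.text.strip() for loc in root.findall("sm:sitemap/sm:loc", NS)]


for sitemap_url in child_sitemaps(SAMPLE_INDEX):
    print(sitemap_url)
```

You only need to submit the index itself; the child sitemaps are the ones you see when you click through in Search Console.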

If you don’t have a blog, then some of these might not apply and you might only have the pages sitemap. But you absolutely need to submit this information to Google if you want to get your pages into the search results.

Having a sitemap also helps when you add new content to your site, because an up-to-date sitemap tells Google when you have a new blog post, a new category, or a new page.

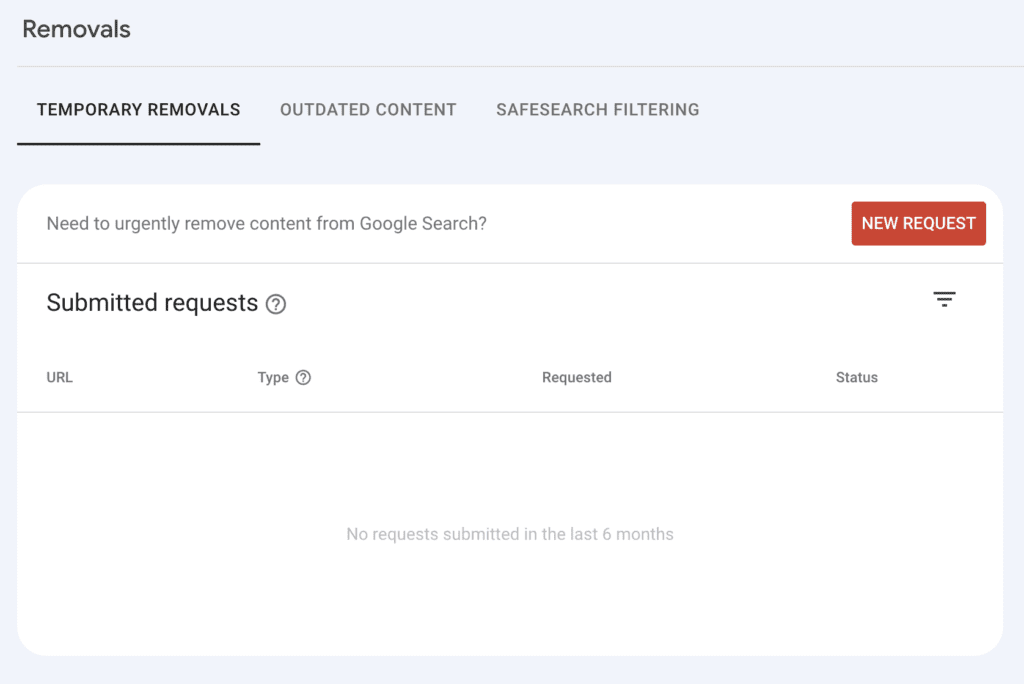

The last section of the Index portion of Google Search Console is the Removals area.

Removals

A removal is when you are requesting that Google remove a URL from the search results. Why would you want to do that, you might ask? There are a lot of reasons.

- You have outdated content that you no longer want to be found.

- You have deleted content and you want the URLs removed from the search results.

- You had some private pages get indexed that you don’t want to be found.

- You’ve changed your services and/or products and you want those pages removed from the search results.

- You got hacked and a bunch of malicious URLs are in the search results, damaging your SEO.

Whatever the reason, there may come a time when you want to have a URL removed, and the Removals area is where you go to do that. You can remove individual URLs, or all URLs that start with a certain prefix.

If I wanted to remove a URL, I could click on New Request, add the URL in question, and get it removed. Usually this happens fairly quickly. One time I whipped up a staging site for a client and forgot to turn off indexing. A simple mistake, but that site got into the search engines and we didn’t want it to be found, so I used this tool to request that all those URLs be removed.

If you turn off indexing, or delete the pages, the URLs will eventually drop out. But the removal tool will get them out faster.

That’s all for today’s Google Search Console Guide. I’ll be back in another week or two with part 4!

Amy Masson

Amy is the co-owner, developer, and website strategist for Sumy Designs. She's been making websites with WordPress since 2006 and is passionate about making sure websites are as functional as they are beautiful.
